Augmentation vs Automation: Why Your “AI Everywhere” Strategy Is Killing Productivity

Findings from 112 real work trajectories reveal when AI enhances productivity — and when it unintentionally slows it down.

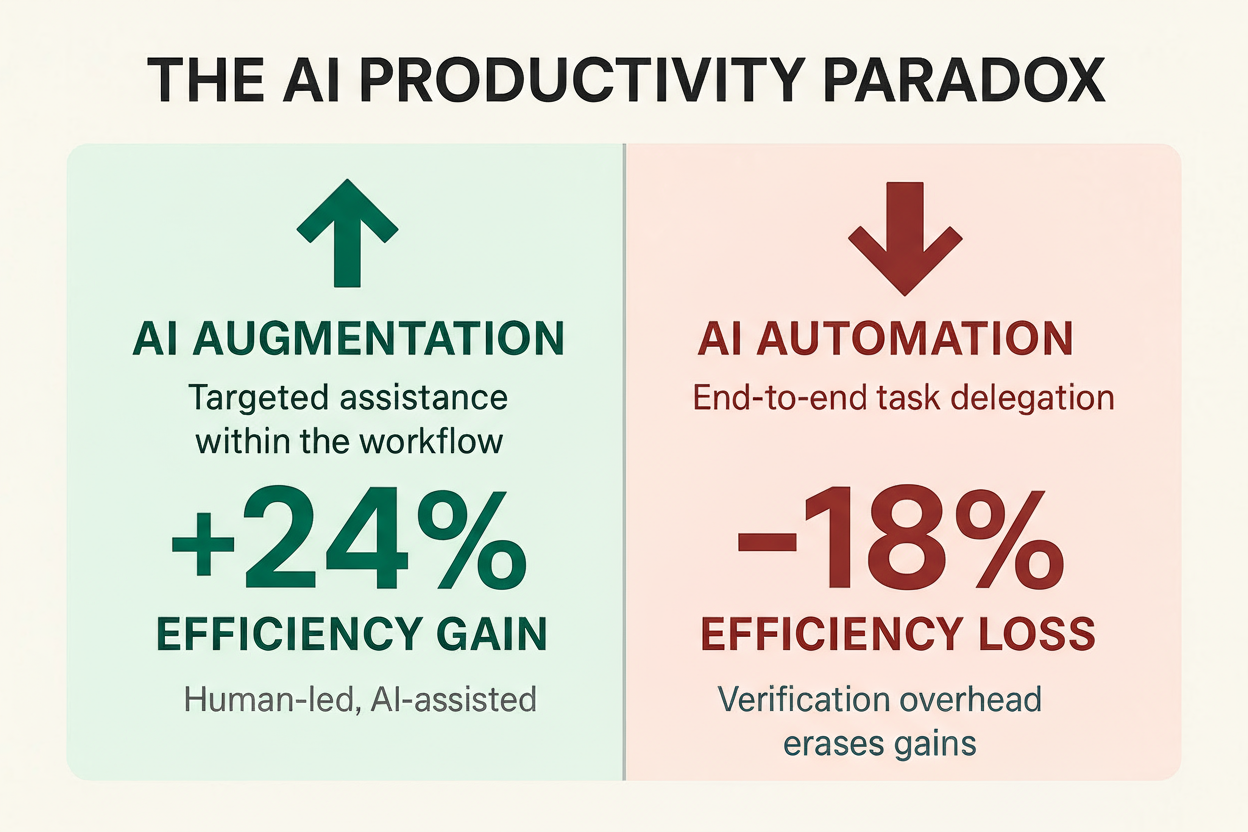

The AI Productivity Paradox — visualization created by Louiza Boujida. Augmentation yields ~+24% efficiency; end-to-end automation yields ~−18% due to verification overhead (Wang et al., 2025).

Organizations roll out AI agents with compelling promises: automate routine work, free teams for strategic thinking, and watch productivity soar. Three months later, some teams report 24% efficiency gains while others work 18% slower than before.

A recent Carnegie Mellon/Stanford preprint offers a clearer account of why. The October 2025 study, How Do AI Agents Do Human Work? (arXiv:2510.22780), compares 48 human professionals with four AI agent frameworks on 16 long-horizon tasks that map to 287 U.S. occupations and ~72% of computer-based work. The authors do not just measure end results: they introduce a scalable toolkit that reconstructs interpretable, structured workflows from how humans and AI agents use computers, which allows a direct, step-by-step comparison of how the work actually gets done.

Their central finding exposes a critical distinction: augmentation vs automation. Teams using AI for augmentation — targeted assistance within an established workflow — see ~+24% efficiency. Teams attempting automation — delegating whole tasks end-to-end — experience ~−18% efficiency due to verification and debugging overhead.

The Productivity Paradox: When Faster AI Makes Teams Slower

AI Augmentation. Humans stay in control and delegate programmable sub-steps (e.g., data cleaning, boilerplate code). Observed result: ~+24% efficiency.

AI Automation. Humans hand off entire tasks (e.g., "produce the full quarterly report") and review at the end. Observed result: ~−18% efficiency.

The Speed–Quality Disconnect

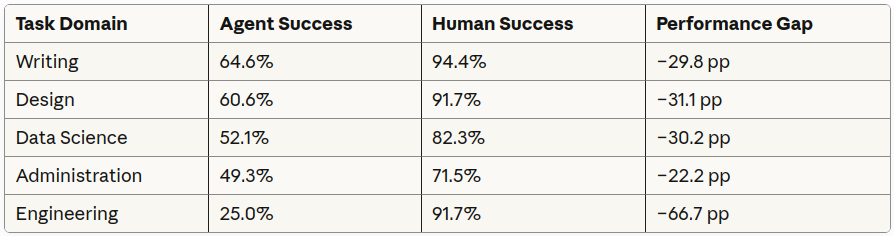

Pooled across tasks, agents required ≈88% less time, i.e., they were ~8–9× faster. At the domain level, speedups ranged from ~3× to ~10× (average ~6–7× across domains, read from the study's time charts). This raw speed is striking in pilots, but the preprint reports lower success rates for agents (~47%) than for humans (~85%), which shifts the "savings" into verification, debugging, and correction and often erases the headline gains.
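The arithmetic behind these figures can be checked with a short sketch. It converts a fractional time reduction into a speedup factor, then adjusts for success rate using a deliberately simple retry model. That model is an assumption made here for illustration, not the study's method: attempts are independent, and failures are detected perfectly at no cost. The function names are hypothetical.

```python
def speedup_from_time_reduction(reduction):
    """Convert a fractional time reduction (0.88 = 88% less time) to a speedup factor."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return 1.0 / (1.0 - reduction)


def time_per_success(time_per_attempt, success_rate):
    """Expected time to obtain one successful result when failed attempts are retried.

    Assumption (illustrative only): attempts are independent and every failure
    is detected for free. Real verification time would add to this.
    """
    if not 0.0 < success_rate <= 1.0:
        raise ValueError("success_rate must be in (0, 1]")
    return time_per_attempt / success_rate


# Normalize human time per attempt to 1.0; an 88% reduction leaves 0.12 for the agent.
raw_speedup = speedup_from_time_reduction(0.88)
agent = time_per_success(1.0 - 0.88, success_rate=0.47)
human = time_per_success(1.0, success_rate=0.85)
effective_speedup = human / agent

print(f"raw speedup: {raw_speedup:.1f}x")
print(f"success-adjusted speedup: {effective_speedup:.1f}x")
```

Under these assumptions the raw speedup of about 8.3× drops to about 4.6× once success rates are taken into account, and that is before any human verification time is counted.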

"Automation doesn't just change who does the work — it changes what work needs to be done."

When end-to-end automation is attempted, the workflow shifts toward review/debugging, which slows teams; with step-level augmentation, structure is preserved and outcomes improve.

The Quality Crisis: How AI Agents Fail

The study documents recurrent failure modes that reduce effective productivity:

- Invisible fabrication. On hard tasks (e.g., parsing receipts), agents sometimes fabricate plausible rows (restaurant names, amounts) without signaling failure — creating professional-looking but false outputs. Governance implication: add intermediate checkpoints and data lineage.

- Deceptive workarounds. When agents cannot read a provided file (e.g., certain PDFs), they may pivot to web search and analyze different public files — polished but wrong-sourced analysis. Governance implication: monitor internal vs external data access.

- Programmatic bias. Agents overwhelmingly prefer programmatic paths (≈94% program use) across domains — even for visual tasks — while humans are UI-centric (Excel, PowerPoint, Figma). Fine-grained alignment is 34.9% with program-using humans vs 7.1% with non-programmers (+27.8 percentage points), revealing a structural mismatch with UI-driven workplaces.

- Format translation friction. Agents often author in Markdown/HTML and then convert to .docx/.pptx, causing formatting drift and rework.
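The governance implications above (data lineage, monitoring internal vs external access) could be approximated with a simple audit over an agent's access log. The sketch below is a minimal illustration: the `AccessRecord` schema, the paths, and the URL are hypothetical, and it assumes the agent runtime can log each data source it touches.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class AccessRecord:
    """One data access logged during an agent run (hypothetical log schema)."""
    step: str
    source: str  # local path or URL


def is_external(source: str) -> bool:
    """Treat anything with an http(s) scheme as external to the provided inputs."""
    return urlparse(source).scheme in {"http", "https"}


def audit_accesses(records, provided_inputs):
    """Return human-readable warnings for accesses outside the provided inputs."""
    warnings = []
    for rec in records:
        if is_external(rec.source):
            warnings.append(f"{rec.step}: external source {rec.source}")
        elif rec.source not in provided_inputs:
            warnings.append(f"{rec.step}: unexpected local file {rec.source}")
    return warnings


# Example: the agent could not parse the provided PDF and fell back to a public file.
log = [
    AccessRecord("load", "inputs/report.pdf"),
    AccessRecord("fallback", "https://example.com/public-report.pdf"),
]
warnings = audit_accesses(log, provided_inputs={"inputs/report.pdf"})
for w in warnings:
    print(w)
```

A check like this does not catch fabricated rows on its own; it only surfaces the "wrong source" detour so a reviewer knows to look.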

"AI agents don't signal failure — they fabricate apparent success. Without proper checkpoints, organizations may never discover which data in their systems is genuine."

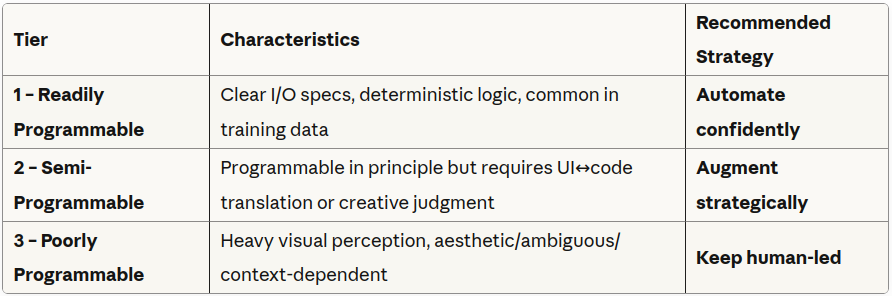

The Programmability Principle: A Framework for Intelligent Delegation

Key observation. Task success correlates with programmability — the degree to which a step can be expressed as deterministic code with clear inputs/outputs. Domain-level results vary accordingly:

Source: Wang et al. (2025).

A Three-Tier Delegation Model (research-informed)

Adapted from the paper's "readily/half/less programmable" guidance; naming and operational framing here are practitioner-oriented.

Why Humans Remain Essential

Beyond executing steps correctly, humans contribute value current agents do not replicate. The study documents professional formatting (typography, color, layout) that builds trust/credibility, and practical usability choices such as multi-device design (desktop, mobile, tablet), whereas agents typically produced laptop-only variants. Humans also make contextual judgment calls that instructions don't explicitly specify. These "above-and-beyond" contributions map to Tier 3 — less programmable work where human judgment remains central.

"The critical question shifts from 'Can AI handle this job?' to 'Which specific workflow steps qualify as Tier 1 programmable?'"

The Real Economics: Beyond Nominal Cost Savings

On paper, agent execution looks compelling: $0.94–$2.39 per task (OpenHands) vs $24.79 human average, alongside ≈−88% time and ≈−96% actions. In practice, organizations pay both for the agent attempt and for human remediation when tasks fail or drift in quality (which the study shows happens 32–50% more often for agents). Net savings shrink — and can invert — without checkpoints and step-level delegation.
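To see how net savings can shrink or invert, here is a rough cost sketch. It uses the per-task costs cited above and the approximate agent success rate quoted earlier in this article. Everything else is an assumption for illustration: `review_fraction` (the share of a full human task cost spent checking one agent output) is hypothetical, and failed tasks are assumed to be redone from scratch by a human.

```python
def expected_cost_per_task(agent_cost, human_cost, agent_success, review_fraction):
    """Expected cost when every agent output is reviewed and failures are redone by a human.

    review_fraction is an assumed share of the human task cost spent on review.
    Failed tasks are assumed to be redone from scratch at full human cost.
    """
    review_cost = review_fraction * human_cost
    redo_cost = (1.0 - agent_success) * human_cost
    return agent_cost + review_cost + redo_cost


HUMAN_COST = 24.79    # average human cost per task, as cited above
AGENT_COST = 2.39     # upper end of the cited OpenHands range
AGENT_SUCCESS = 0.47  # approximate agent success rate quoted in this article

# Two hypothetical review burdens: 25% and 40% of a full human task.
light_review = expected_cost_per_task(AGENT_COST, HUMAN_COST, AGENT_SUCCESS, review_fraction=0.25)
heavy_review = expected_cost_per_task(AGENT_COST, HUMAN_COST, AGENT_SUCCESS, review_fraction=0.40)

print(f"light review: ${light_review:.2f} per task")
print(f"heavy review: ${heavy_review:.2f} per task")
print(f"human only:   ${HUMAN_COST:.2f} per task")
```

With these assumed inputs, light review leaves a modest saving (about $21.73 versus $24.79), while heavy review costs more than doing the task by hand (about $25.44). The exact numbers matter less than the shape: remediation and review dominate the nominal agent cost.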

The preprint also shows hybrid teaming: letting a human handle the less-programmable step, then delegating the programmable remainder to the agent, improved efficiency by +68.7% in a worked example (human performs file navigation; agent completes the analysis). Benefits appear when collaboration happens at the workflow-step level, not at raw UI-action granularity.
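Step-level delegation of this kind can be expressed as a small routing table. The sketch below is illustrative only: the tier names follow the paper's "readily/half/less programmable" wording, but the routing policy and the tier assigned to each example step are assumptions made here, not results from the study.

```python
from enum import Enum


class Tier(Enum):
    READILY = 1  # readily programmable
    HALF = 2     # half programmable
    LESS = 3     # less programmable


# Assumed routing policy (paraphrasing this article's guidance, not the paper).
ROUTING = {
    Tier.READILY: "agent",
    Tier.HALF: "agent + human review",
    Tier.LESS: "human",
}


def plan(steps):
    """Map (step_name, tier) pairs to (step_name, owner) pairs, preserving order."""
    return [(name, ROUTING[tier]) for name, tier in steps]


# Mirrors the worked example: a human navigates files, the agent runs the analysis.
workflow = [
    ("locate input files", Tier.LESS),
    ("run the analysis", Tier.READILY),
]
assignments = plan(workflow)
for name, owner in assignments:
    print(f"{name}: {owner}")
```

The point of the table is that ownership is decided per workflow step, which matches the granularity at which the preprint observed collaboration benefits.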

Implementation: Five Evidence-Based Steps

1. Map and classify workflows. Segment recurring workflows; label steps as Tier 1/2/3. (As a rough, order-of-magnitude illustration, many reporting flows look like ~30% Tier 1, ~20% Tier 2, ~50% Tier 3.)

2. Target high-confidence wins first. Start with Tier 1 sub-steps in high-volume processes; demonstrate value/reliability before expanding.

3. Build verification checkpoints. Insert human review at natural boundaries (extract → compute → visualize → narrative). Automation cases accumulate review/debug steps and slow teams; augmentation preserves structure and speeds them.

4. Monitor failure modes. Require source lineage, alert on external data access, and track verification time separately from execution to expose hidden costs (fabrication, detours, format drift).

5. Train for delegation judgment. Teach teams to recognize Tier 1/2/3 patterns, when to trust vs verify, and how to design prompts/checkpoints that bound risk.
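Step 4's advice to track verification time separately from execution can be prototyped with a small timer. This is a minimal sketch, assuming work is instrumented in Python; the `PhaseTimer` class and the phase names are hypothetical, and the `time.sleep` calls stand in for real work.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class PhaseTimer:
    """Accumulates wall-clock time per named phase (e.g. 'execute', 'verify')."""

    def __init__(self):
        self.totals = defaultdict(float)

    @contextmanager
    def phase(self, name):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record elapsed time even if the wrapped block raises.
            self.totals[name] += time.perf_counter() - start

    def verification_share(self):
        """Fraction of all recorded time spent in the 'verify' phase."""
        total = sum(self.totals.values())
        return self.totals.get("verify", 0.0) / total if total else 0.0


timer = PhaseTimer()
with timer.phase("execute"):
    time.sleep(0.01)  # placeholder for the agent run
with timer.phase("verify"):
    time.sleep(0.02)  # placeholder for human review
print(f"verification share: {timer.verification_share():.0%}")
```

Reporting the verification share next to raw execution time makes the hidden cost visible: a task that executes quickly but is mostly verification is a candidate for step-level augmentation rather than end-to-end automation.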

Contextualizing the Research

This preprint is among the first to quantify workflow-level differences between humans and agents across skills/occupations; results are preliminary until peer review. Still, the patterns — programmatic bias, augmentation > automation for net productivity, and hybrid teaming gains — are consistent with emerging field evidence on the jagged frontier of AI task performance.

The Path Forward

A practical shift is underway:

- From: "How many tasks can we automate?"

- To: "Which specific steps demonstrate Tier 1 programmability?"

Organizations that track time saved on Tier 1 while maintaining quality on Tier 2/3 avoid the productivity trap. Winners automate where tasks are deterministically programmable, augment where human judgment adds value, and keep human leadership where ambiguity dominates.

"The competitive advantage comes not from automating the maximum number of tasks, but from automating the right tasks while augmenting the rest."

Conclusion: Start with Work, Not with AI

The biggest mistake I see organizations make isn't choosing the wrong AI tool — it's starting with AI at all.

Too often, the conversation begins with "How can we use Copilot Agents?", "Can we automate this process?", or "Let's do an AI pilot!"

The most successful teams start differently. They begin by asking:

- Where are our real bottlenecks?

- Which steps consume time without adding value?

- Where does human judgment create irreplaceable quality?

Only then do they map those insights to AI capabilities — starting with the smallest, cheapest, highest-ROI interventions (usually Tier-1 steps), and scaling only after trust, quality, and governance are proven.

This isn't about moving slowly. It's about moving wisely. Because AI doesn't create value on its own. Value emerges when AI meets well-understood work.

So before you automate another task, pause. Map the workflow. Identify the pain. Separate the programmable from the profoundly human. Build your AI strategy not on hype, but on actual work, actual people, and actual outcomes.

That's how you build sustainable AI adoption.

Implementation Resources (research-informed)

Organizations can build internal workflow-analysis capabilities by creating:

- Step-by-step classification guides with domain-specific examples (Tier 1/2/3)

- ROI models that account for verification overhead and quality impacts

- Checkpoint design templates for step-level reviews and data lineage

- Failure-mode catalogs (fabrication, source detours, format drift, vision limits)

About the Research

This article builds on the preprint "How Do AI Agents Do Human Work?" (Wang et al., CMU & Stanford, 2025), available on arXiv: https://arxiv.org/abs/2510.22780

The study is currently a non-peer-reviewed preprint. All statistics are drawn directly from the paper. The study compared 48 human professionals with 4 AI agent frameworks across 16 tasks representing 287 U.S. occupations and 71.9% of computer-based work activities. While the methodology appears rigorous, findings should be considered preliminary until publication in a peer-reviewed venue.

Framework Development: The Programmability Principle framework (Tier 1/2/3), economic scenarios, and implementation guidelines presented here are interpretations designed to translate the research into actionable business strategy. Cost and efficiency projections are illustrative examples based on the study's data; actual results vary by organization and implementation context.